March 21, 2026

NVIDIA GTC 2026: 10 Biggest Announcements That Define the Future of AI Infrastructure

- NVIDIA GTC 2026: 10 Biggest Announcements

- 1. BlueField-4 STX: A New AI Storage Architecture

- 2. Rack-Scale AI With CMX Systems

- 3. The Shift to AI Inference Dominance

- 4. New AI Chip Strategy: Vera CPU + Rubin GPUs + Groq Integration

- 5. The “Feynman” Roadmap for Next-Gen AI Chips

- 6. AI Becomes a Full-Stack Platform

- 7. Explosion of Storage Innovation Across Vendors

- 8. Agentic AI and Autonomous Systems Take Center Stage

- 9. Networking Becomes Critical: The Rise of AI Interconnects

- 10. AI Factories and Global Infrastructure Expansion

- Conclusion

Key Takeaways:

1. From Training to Inference

AI is shifting from research to real-world deployment.

2. From GPUs to Full Systems

Compute, storage, networking and software are now deeply integrated.

3. From Servers to AI Factories

Infrastructure is scaling to industrial levels.

4. From Models to Autonomous Agents

AI systems are becoming active decision-makers.

5. From Compute Bottlenecks to Data Bottlenecks

Storage and data pipelines are now the critical constraint.

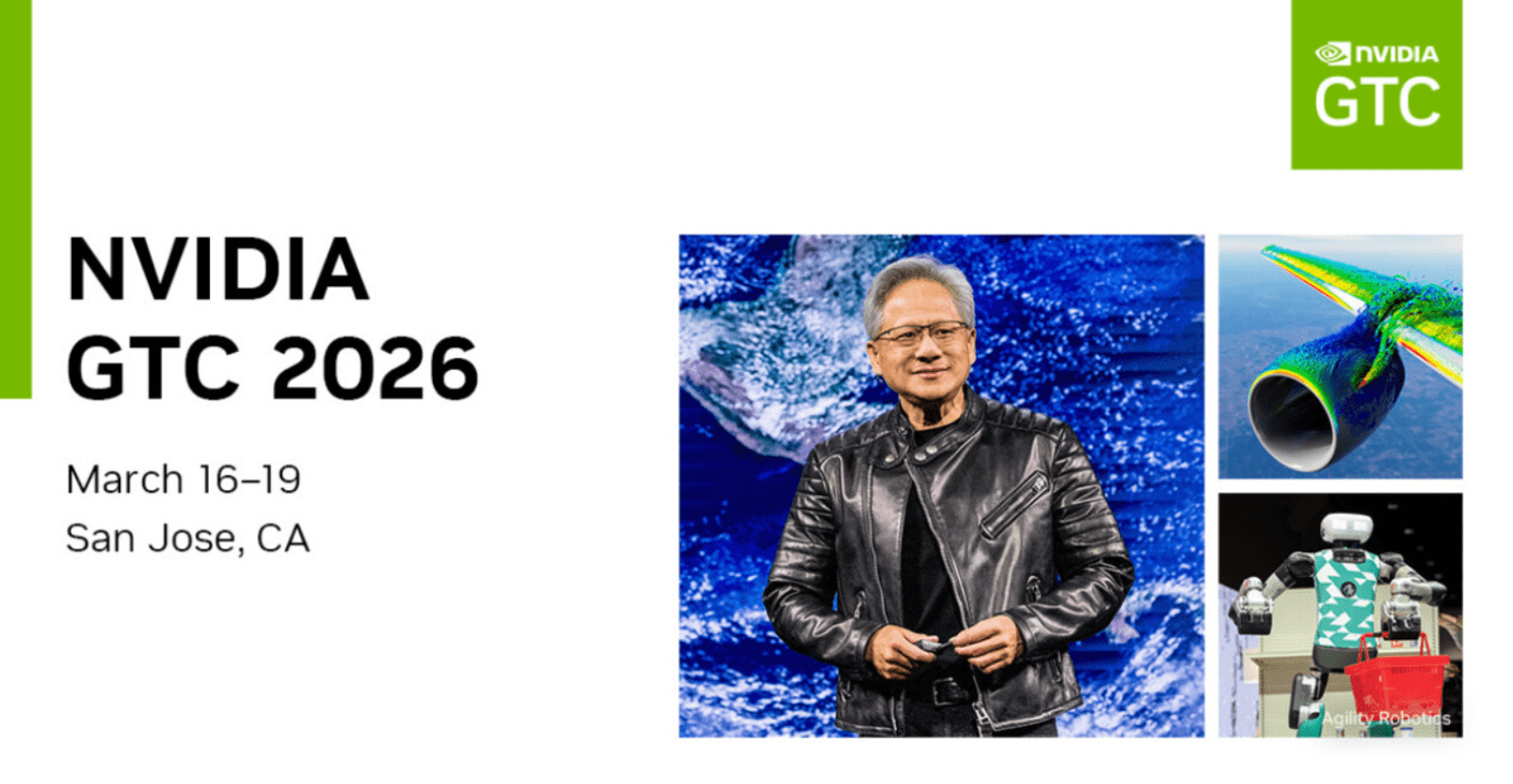

NVIDIA GTC 2026 wasn’t just another developer conference; it marked a turning point in how artificial intelligence infrastructure is designed, deployed, and scaled. Held March 16–19 in San Jose, the event brought together over 30,000 attendees and featured more than 1,000 sessions across AI, robotics, data centers, and accelerated computing.

This year’s theme was clear: AI is no longer experimental; it is becoming core global infrastructure. From inference breakthroughs to storage reinvention, NVIDIA and its ecosystem partners unveiled technologies that reshape the entire AI stack.

NVIDIA GTC 2026: 10 Biggest Announcements

Here are the 10 biggest announcements from NVIDIA GTC 2026 and why they matter.

1. BlueField-4 STX: A New AI Storage Architecture

One of the most significant announcements was NVIDIA’s BlueField-4 STX storage architecture, purpose-built for agentic AI systems.

This architecture addresses a growing problem: data bottlenecks starving GPUs. As large language models expand their context windows, traditional CPU-based storage pipelines can’t keep up.

STX solves this by:

- Enabling direct NVMe access

- Using DPUs (BlueField-4) to bypass CPU bottlenecks

- Delivering up to 5× token throughput and 4× energy efficiency

It also introduces compute-storage disaggregation, a concept increasingly central to AI infrastructure.

Why it matters:

AI performance is no longer just about GPUs; it’s also about feeding them data efficiently. STX represents a foundational shift in how storage integrates with AI compute.
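To get a feel for why longer context windows put pressure on the data path, here is a rough back-of-the-envelope sketch. It assumes the state that grows with context is the attention key/value (KV) cache, which the announcement summary above does not spell out, and the model shape (`LAYERS`, `KV_HEADS`, `HEAD_DIM`) is hypothetical and not taken from any NVIDIA material.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, context_tokens, bytes_per_value=2):
    """Approximate KV-cache size for one sequence at a given context length."""
    # Factor of 2: each layer stores one key tensor and one value tensor.
    return 2 * num_layers * num_kv_heads * head_dim * context_tokens * bytes_per_value


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

for tokens in (8_000, 128_000, 1_000_000):
    gib = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens) / 2**30
    print(f"{tokens:>9,} tokens -> {gib:8.1f} GiB of KV cache per sequence")
```

Under these assumptions the cache grows linearly with context length, so a long-context request can need far more state than a short one. Moving that much data quickly is the kind of problem a faster storage path is meant to help with.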

2. Rack-Scale AI With CMX Systems

Building on STX, NVIDIA introduced CMX, a rack-scale AI infrastructure platform that integrates:

- Storage

- Networking

- Compute

- DPUs

Major enterprise vendors, including Dell, IBM, and NetApp, are already developing CMX-based systems.

Why it matters:

The industry is moving from individual servers to fully integrated AI racks, often called “AI factories.” CMX is NVIDIA’s blueprint for that future.

3. The Shift to AI Inference Dominance

Perhaps the biggest strategic shift announced at NVIDIA GTC 2026 was NVIDIA’s focus on AI inference, not just training.

CEO Jensen Huang projected that:

- AI infrastructure could become a $1 trillion market by 2027

- Inference workloads (running AI models in real time) will dominate demand

This shift reflects the reality that AI is moving into production at scale.

Why it matters:

Training built the AI boom, but inference will monetize it.

4. New AI Chip Strategy: Vera CPU + Rubin GPUs + Groq Integration

NVIDIA unveiled a new heterogeneous compute strategy at NVIDIA GTC 2026 combining:

- Vera CPUs

- Rubin GPUs

- Groq-based inference chips

In this architecture:

- Groq handles decode stages

- NVIDIA GPUs handle prefill stages
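The prefill/decode split above can be illustrated with a toy sketch. This is not NVIDIA's or Groq's software: the `Request` class, the `prefill` and `decode` functions, and the arithmetic "model" are all hypothetical stand-ins. The sketch only shows that prefill handles the whole prompt in one pass, while decode produces tokens one at a time from cached state.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    prompt_tokens: list
    max_new_tokens: int
    kv_cache: list = field(default_factory=list)       # stand-in for real KV tensors
    output_tokens: list = field(default_factory=list)


def prefill(req):
    """Prefill stage: process the entire prompt at once and build the cache."""
    req.kv_cache = list(req.prompt_tokens)
    return req


def decode(req):
    """Decode stage: emit one token per step, each step reading the whole cache."""
    for _ in range(req.max_new_tokens):
        next_token = sum(req.kv_cache) % 50_000   # toy rule, not a real language model
        req.output_tokens.append(next_token)
        req.kv_cache.append(next_token)
    return req


def serve(req, prefill_worker=prefill, decode_worker=decode):
    # In a disaggregated setup the two workers would run on different hardware;
    # the hand-off between them is where the cache would have to be transferred.
    return decode_worker(prefill_worker(req))


result = serve(Request(prompt_tokens=[1, 2, 3], max_new_tokens=2))
print(result.output_tokens)
```

Because the two stages stress hardware differently (one large batch of work versus many small sequential steps), routing them to different chips is a plausible design choice, though the exact hand-off mechanism is not described in the announcement summary here.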

Why it matters:

NVIDIA is no longer relying solely on GPUs; it’s embracing specialized chip ecosystems to stay competitive.

5. The “Feynman” Roadmap for Next-Gen AI Chips

NVIDIA also previewed its future roadmap, including the Feynman architecture, expected around 2028.

This extends the existing sequence:

- Hopper → Blackwell → Rubin → Feynman

Why it matters:

The roadmap signals NVIDIA’s long-term dominance strategy and reassures investors that innovation won’t slow down.

6. AI Becomes a Full-Stack Platform

A major theme across the keynote was NVIDIA’s transformation into a full-stack AI company.

This includes:

- CUDA and CUDA-X software ecosystem

- AI Enterprise tools

- Networking (Spectrum-X)

- DPUs (BlueField)

- AI frameworks and models

NVIDIA emphasized that much of its software stack is free or open, accelerating adoption.

Why it matters:

The company is positioning itself as the “AWS of AI infrastructure”, not just a chip vendor.

7. Explosion of Storage Innovation Across Vendors

Storage vendors played a huge role at NVIDIA GTC 2026.

Companies like:

- Kioxia

- SanDisk

- Dell

- NetApp

showcased high-performance SSDs and AI-optimized storage systems designed for:

- Massive datasets

- Real-time inference

- Distributed AI pipelines

Storage is evolving toward:

- Disaggregated architectures

- NVMe-over-Fabrics

- GPU-direct storage

Why it matters:

AI infrastructure is becoming data-first, not compute-first.

8. Agentic AI and Autonomous Systems Take Center Stage

Another major focus was agentic AI, meaning systems that can:

- Plan

- Reason

- Act autonomously

NVIDIA introduced new frameworks (like NemoClaw) to support:

- Multi-agent systems

- AI autonomy

- Enterprise deployment with safety controls

Why it matters:

We are moving beyond chatbots toward fully autonomous AI systems.

9. Networking Becomes Critical: The Rise of AI Interconnects

A subtle but crucial theme: networking is now as important as compute.

Technologies like:

- Spectrum-X Ethernet

- ConnectX-9 SuperNICs

enable:

- High-speed data movement

- Low-latency GPU communication

- Distributed AI workloads

Industry analysts suggest a shift from:

“Compute is king” → “Interconnect is king”

Why it matters:

Without fast networking, even the best GPUs sit idle.
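A simple estimate shows how link speed translates into idle GPU time. The sketch below assumes each step is a fixed amount of compute followed by a data exchange with no overlap between the two, which is a deliberate simplification; the step time, data volume, and link speeds are hypothetical numbers, not Spectrum-X or ConnectX-9 specifications.

```python
def gpu_utilization(compute_seconds, bytes_exchanged, link_bytes_per_second):
    """Fraction of each step spent computing, assuming no compute/communication overlap."""
    comm_seconds = bytes_exchanged / link_bytes_per_second
    return compute_seconds / (compute_seconds + comm_seconds)


# Hypothetical step: 0.1 s of compute, then 10 GB exchanged between GPUs.
for link_gb_per_s in (50, 100, 400):
    util = gpu_utilization(0.1, 10e9, link_gb_per_s * 1e9)
    print(f"{link_gb_per_s:>4} GB/s link -> GPU busy {util:.0%} of each step")
```

Real systems overlap communication with compute, so actual utilization is usually better than this model suggests, but the trend is the same: the slower the interconnect, the longer the GPUs wait.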

10. AI Factories and Global Infrastructure Expansion

NVIDIA emphasized the concept of AI factories: massive data centers dedicated to AI production.

This includes:

- Partnerships with cloud providers

- Investments in AI infrastructure companies

- Expansion into global markets like China

These AI factories will:

- Train models

- Run inference

- Power enterprise AI applications

Why it matters:

AI is becoming a utility, like electricity or the internet.

Conclusion

NVIDIA GTC 2026 made one thing clear: we are entering the “infrastructure phase” of AI.

In the early days, the focus was on building better models. Today, the challenge is:

- Scaling them

- Deploying them

- Feeding them data

- Running them efficiently in real time

NVIDIA’s announcements, from BlueField-4 STX to inference-focused chips, show a company evolving to meet that challenge head-on.

The most important takeaway is this:

AI is no longer just software; it is becoming the backbone of global digital infrastructure.

After NVIDIA GTC 2026, it’s clear that NVIDIA intends to build that backbone.

Which of these NVIDIA GTC 2026 announcements surprised you the most? Share it with us in the comments section below.
