February 25, 2026

Microsoft Unveils Maia 200 AI Chip to Take On AWS, Google and Nvidia in Cloud AI Race

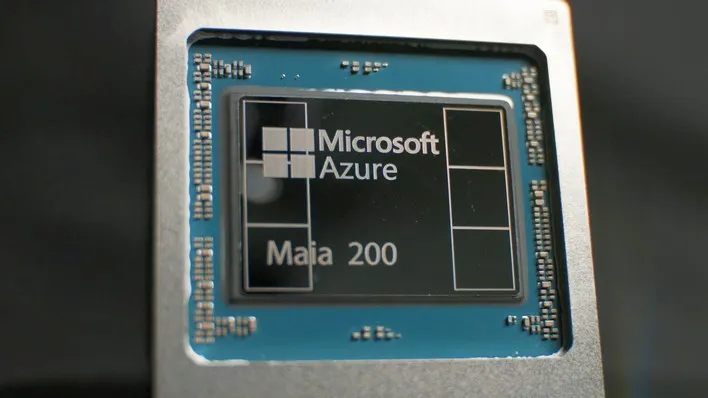

Microsoft has officially launched its next-generation AI accelerator chip, Maia 200, marking a bold step in the company’s strategy to challenge cloud rivals Amazon Web Services (AWS), Google Cloud and even dominant AI chip maker Nvidia.

The Maia 200 is a custom-designed AI inference accelerator built on Taiwan Semiconductor Manufacturing Co’s (TSMC) advanced 3-nanometer process, featuring more than 100 billion transistors and cutting-edge memory and data-movement technologies aimed at speeding up the execution of large AI models.

- Microsoft Unveils Maia 200 AI Chip: Performance and Competitive Positioning

- Microsoft Unveils Maia 200 AI Chip: Built for Inference and Cloud Scale

- Microsoft Unveils Maia 200 AI Chip: Deployment and Ecosystem Integration

- Microsoft Unveils Maia 200 AI Chip: Strategic Implications Amid Intensifying AI Hardware Competition

Microsoft Unveils Maia 200 AI Chip: Performance and Competitive Positioning

Microsoft says Maia 200 delivers over 10 petaflops of compute at 4-bit precision (FP4) and more than 5 petaflops at 8-bit precision (FP8). Both are throughput figures commonly used to compare accelerators on AI workloads such as language models and generative applications.

According to the company, these figures give Maia 200 a performance edge over rival hyperscaler silicon:

- Roughly three times the FP4 throughput of AWS’s Trainium3 chip

- FP8 performance that outpaces Google’s seventh-generation TPU in certain benchmarks

Microsoft also claims the new accelerator delivers around 30% better performance per dollar than its own previous-generation hardware, a key measure of cost efficiency for cloud operators.
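To make the performance-per-dollar claim concrete, the arithmetic below is a minimal sketch of what such a gain would imply, assuming "30% better" means 1.3 times the work per dollar spent. The function names are illustrative and the interpretation is an assumption, not something Microsoft has spelled out.

```python
def throughput_gain_at_fixed_budget(perf_per_dollar_gain: float) -> float:
    """Relative throughput obtained for the same spend."""
    return 1.0 + perf_per_dollar_gain


def cost_saving_at_fixed_throughput(perf_per_dollar_gain: float) -> float:
    """Fraction of spend saved when delivering the same throughput."""
    return 1.0 - 1.0 / (1.0 + perf_per_dollar_gain)


gain = 0.30  # "around 30%" as quoted in the article (assumed to mean 1.3x work per dollar)
print(f"Same budget: {throughput_gain_at_fixed_budget(gain):.2f}x the throughput")
print(f"Same throughput: {cost_saving_at_fixed_throughput(gain):.1%} lower cost")
```

Under this reading, a 30% improvement in performance per dollar corresponds to roughly a 23% lower bill for an unchanged workload, rather than a 30% saving.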

Microsoft Unveils Maia 200 AI Chip: Built for Inference and Cloud Scale

Unlike general-purpose GPUs, Maia 200 is specifically engineered for AI inference — the process of generating outputs once a model has been trained. This shift reflects broader industry trends as companies seek chips optimized for delivering real-world AI services quickly and efficiently.

The chip’s architecture includes a high-bandwidth memory subsystem composed of 216 GB of HBM3e memory capable of 7 terabytes per second of data throughput, plus on-chip SRAM and custom data-movement engines to keep large models fed with information.
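A rough back-of-envelope sketch shows what these memory figures could mean in practice. It uses only numbers quoted in this article (216 GB, 7 TB/s, and the 10 and 5 petaflops throughput claims, treated here as lower bounds) and assumes decimal units, 0.5 bytes per weight at FP4 and 1 byte at FP8. It ignores KV cache, activations and any other overhead, and all variable names are illustrative.

```python
HBM_BYTES = 216e9       # 216 GB of HBM3e (decimal gigabytes assumed)
HBM_BANDWIDTH = 7e12    # 7 TB/s, in bytes per second
PEAK_FLOPS = {"FP4": 10e15, "FP8": 5e15}      # "over 10" / "more than 5" petaflops
BYTES_PER_WEIGHT = {"FP4": 0.5, "FP8": 1.0}   # assumption: no scaling-factor overhead

# Time to stream the entire HBM contents once at the quoted bandwidth.
full_sweep_ms = HBM_BYTES / HBM_BANDWIDTH * 1e3
print(f"One full pass over HBM: {full_sweep_ms:.1f} ms")

for fmt in ("FP4", "FP8"):
    # Upper bound on weights that fit if HBM held nothing but weights.
    max_weights = HBM_BYTES / BYTES_PER_WEIGHT[fmt]
    # Roofline ridge point: operations per byte of memory traffic
    # needed before compute, not bandwidth, becomes the limit.
    ridge = PEAK_FLOPS[fmt] / HBM_BANDWIDTH
    print(f"{fmt}: up to {max_weights / 1e9:.0f}B weights, "
          f"ridge point ~{ridge:.0f} FLOPs/byte")
```

The high ridge points suggest why the memory subsystem gets so much emphasis: a workload that performs relatively few operations per byte fetched would be limited by the 7 TB/s memory bandwidth rather than by peak compute.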

Microsoft Unveils Maia 200 AI Chip: Deployment and Ecosystem Integration

Microsoft has already begun deploying Maia 200 in its Azure US Central data center near Des Moines, Iowa, with additional capacity planned for a facility in Arizona and beyond. Initial workloads include internal AI projects as well as customer-facing services such as Microsoft 365 Copilot and Azure AI Foundry model hosting.

In addition to the hardware, Microsoft is previewing a Maia Software Development Kit (SDK) designed to help developers optimize AI models for the new chip, with PyTorch support and a Triton compiler.

Microsoft Unveils Maia 200 AI Chip: Strategic Implications Amid Intensifying AI Hardware Competition

The launch positions Microsoft more directly in a competitive AI silicon landscape long dominated by Nvidia’s GPUs, which remain widely used across cloud providers and enterprise clusters. AWS and Google have been building their own custom chips for years, such as AWS’s Trainium and Google’s TPUs, but Microsoft’s push with Maia 200 signals a renewed effort to reduce reliance on third-party silicon and control more of its AI infrastructure stack.

Despite the performance claims, analysts note that Nvidia’s broader software ecosystem and established developer tools continue to be a strength for many AI customers, suggesting that custom silicon efforts from hyperscalers will complement, rather than outright replace, GPU-based systems in the near term.

How will this move impact the industry as a whole? Share your feedback with us in the comments section below.
